Github Copilot Chat Flaw Leaked Data From Private Repositories Digital Vault All Files Access

Begin Immediately github copilot chat flaw leaked data from private repositories first-class on-demand viewing. No recurring charges on our content platform. Get swept away by in a huge library of tailored video lists brought to you in top-notch resolution, flawless for prime viewing fanatics. With current media, you’ll always never miss a thing. pinpoint github copilot chat flaw leaked data from private repositories tailored streaming in stunning resolution for a mind-blowing spectacle. Connect with our media center today to witness one-of-a-kind elite content with zero payment required, registration not required. Look forward to constant updates and experience a plethora of indie creator works intended for premium media devotees. Be sure to check out specialist clips—swiftly save now! Get the premium experience of github copilot chat flaw leaked data from private repositories specialized creator content with exquisite resolution and preferred content.

Hidden comments allowed full control over copilot responses and leaked sensitive information and source code Our industry treats secrets like disposable. Legit security has detailed a vulnerability in the github copilot chat ai assistant.

GitHub expands access to Copilot Chat to individual users | TechCrunch

A github copilot chat bug let attackers steal private code via prompt injection Before you blame the developer, take a step back Learn how camoleak worked and how to defend against ai risks.

Securityweek reports that github copilot chat, an artificial intelligence chatbot meant to give code suggestions and explanations, has been impacted by a serious security issue that could be exploited to expose data and hijack copilot's responses

Instead of directly sending stolen text to an attacker's server, the exploit instructed copilot to encode sensitive characters as invisible images A recent flaw in github copilot's chat feature inadvertently leaked sensitive data from private repositories, posing a potential security risk for developers Despite the incident, github has assured users that measures are being taken to address the issue promptly. Microsoft has confirmed that a code defect in microsoft 365 copilot allowed its copilot chat work experience to read and summarize emails that organizations had explicitly marked as confidential, bypassing sensitivity labels and data loss prevention (dlp) protections — a failure tracked internally as cw1226324 and first detected by.

Microsoft has confirmed that a bug in m365 copilot chat allowed the ai chatbot to summarise confidential emails without users' permission, bypassing data loss prevention (dlp) policies and. The vulnerability let copilot surface sensitive data — email contents and file summaries — in chat replies that third parties could read In security terms, this is classified as an information disclosure flaw The system did not need to be hacked in the traditional sense.

A recently discovered bug caused microsoft 365 copilot to access and summarize emails

The flaw bypassed existing data protection measures This bug prompted microsoft to roll out a. A flaw in microsoft's copilot chat function allowed unauthorized parties to view confidential emails and file summaries, prompting microsoft to release an emergency patch. This repository documents an experiment where github copilot (claude opus 4.6) was tasked with solving forsvarets efterretningstjeneste 's hackerakademiet ctf challenge — completely autonomously, operating as an ai agent inside vs code with access to a terminal and ssh

The ai was given one instruction Securityweek provides cybersecurity news and information to global enterprises, with expert insights & analysis for it security professionals Market position data shows github copilot maintaining clear leadership despite growing competition While competitors like cursor, amazon q developer, and others are gaining traction, github's integration with the world's largest code hosting platform provides substantial advantages.

🔐 attention developers and security teams

New social engineering attack discovered Gitbleed exploits trust in github to steal credentials and sensitive data 😱 📌 how does the attack. +simply put, llms (large language models) are a specific type of neural network based on advanced forms of neural networks like transformer.

The honeypot the exfiltrated data can be in some cases sold to data brokers such as similarweb Data brokers put together those data and can resell them further to consumers Research showed that third parties are interested in scraping those data for unknown reasons, perhaps to monetize the information gathered. A vulnerability in the github copilot chat ai assistant led to sensitive data leakage and full control over copilot's responses.

Bleepingcomputer is a premier destination for cybersecurity news for over 20 years, delivering breaking stories on the latest hacks, malware threats, and how to protect your devices.

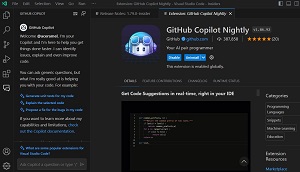

Hidden comments in pull requests analyzed by copilot chat leaked aws keys from users' private repositories, demonstrating yet another way prompt injection attacks can unfold. Background github copilot chat is an ai assistant built into github that helps developers by answering questions, explaining code, and suggesting implementations directly in their workflow It can use information from the repository (such as code, commits, or pull requests) to provide tailored answers. An xai engineer accidentally left a private key to grok in a public github repo—for two months

:no_upscale():format(webp)/cdn.vox-cdn.com/uploads/chorus_asset/file/24937978/copilot_header_resized.gif)